Instrumenting Node.js code with Prometheus custom metrics

Automatic instrumentation is great, but to get the most out of your monitoring you often need to instrument your code. In this article I am going to explain how to instrument a Node.js express app with custom metrics using the Prometheus prom-client package.

Although this article specifically addresses Node.js and Express, my hope is that the general concepts are applicable to other languages too.

What is 'instrumenting code'?

I'm a big proponent of observing not just technical metrics like CPU usage or queue length, but business and customer metrics. For example, the number of customers who logged into your app each hour. Or the conversion rate of customers who visit your website versus those who go on to purchase a product. But how on earth are our monitoring tools supposed to know about this context?

The answer is that in most cases we need to expose this data ourselves in our code. This is called instrumentation of code. This can include annotating the code with metadata, or adding in logic to calculate and expose data.

In a nutshell, instrumenting code means we write code to expose information about the technical, business, and customer context. This information can be collected and analyzed by our monitoring tools.

What is Prometheus?

Prometheus is an open source metrics collection and storage solution. It has been widely adopted across the industry.

Prometheus is specifically designed for capturing metrics. It is not used for collecting logs (i.e. raw events) or distributed traces. There's plenty of content online discussing the differences between metrics, logs, and traces so I won't cover that here.

The most common way Prometheus ingests metric data is to scrape a metrics endpoint.

A metrics endpoint is a standard way for an app or service to expose metrics. Many apps expose metrics out of the box just by navigating to http://<your-app>/metrics and the content is just a plain text file of metric names and values. Here's a made up and simplified example of a /metrics endpoint that returns just one metric:

# HELP cpu_total_seconds Total CPU time spent in seconds

# TYPE cpu_total_seconds counter

cpu_total_seconds{host="myserver"} 0.951This kind of data isn't very useful by itself, we need to ingest it and run calculations on it to make sense of what it is telling us. That's where Prometheus comes in.

We configure Prometheus to scrape a metrics endpoint like this, which just means it reads in all the data periodically and stores it in its time-series database. Once the data is in the database, we can query it to (hopefully) gain insight about what is going on.

What about when we write our own code though? How do we export a metrics endpoint? The answer is that most common programming languages contain a Prometheus SDK (library or package) that you can import and use to collect and expose metrics. This is exactly what we are going to do in this article using the prom-client package for Node.js.

Installing the prom-client package

This is very straightforward. Firstly we use npm to install the prom-client package:

npm install prom-client --saveThen we need to add the following declarations in whatever Node.js source file you plan on adding your instrumentation to:

const Prometheus = require('prom-client');

const register = new Prometheus.Registry();This creates an instance of prom-client for us to use, and then defines a metrics registry. The registry is a list of metrics that prom-client is collecting.

Node.js default metrics

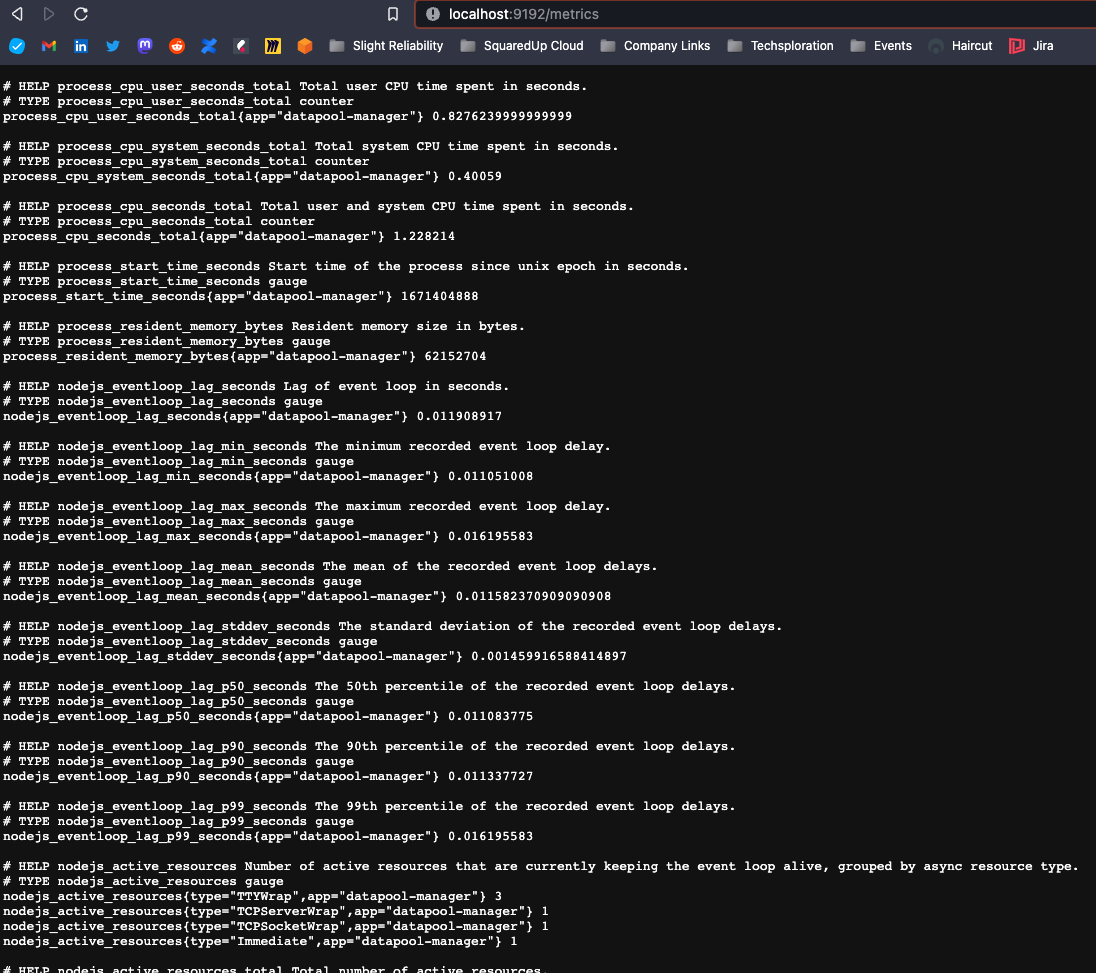

The prom-client package comes with a series of default Node.js metrics that it can collect. This means we can expose some information about how our Node.js app is running without having to manually define any custom metrics. This is that "auto-instrumentation" I mentioned in the introduction.

To collect these default metrics, we need to add these to our metrics registry and tell Prometheus to collect them:

register.setDefaultLabels({

app: 'your-app-name'

})

Prometheus.collectDefaultMetrics({register})Then we need to expose an HTTP metrics endpoint so that we can get at the metrics. My app uses the express package in which you define different "routes" (paths to services). I added a /metrics route which exposes all the metrics that are currently registered using the following code:

router.get('/metrics', function(req, res)

{

res.setHeader('Content-Type',register.contentType)

register.metrics().then(data => res.status(200).send(data))

});When I run my app now and navigate to /metrics in a web browser I see these default Node.js metrics:

This is a great first step, we are exposing some information about how our Node.js app is running. Unfortunately, I don't find this information particularly useful. This is purely technical information about resource usage and internal timings...what if I want to expose something else?

Defining a custom counter

A counter is the simplest Prometheus data type. It is a value that can only be increased over time. You use PromQL functions like increase() to track how counters change over time to create useful graphs.

For my app, I would like to track how many HTTP requests it receives. In other words, the traffic hitting my service (one of the four golden signals). I'd like to be able to filter this by:

- The route taken (i.e. the specific URL requested)

- The status code of the response (e.g. 200 "OK" or 404 "Not Found")

- The method of the request (e.g. GET, POST, or PUT)

To achieve this we first need to define our custom counter and add it to the metrics registry:

const http_request_counter = new Prometheus.Counter({

name: 'myapp_http_request_count',

help: 'Count of HTTP requests made to my app',

labelNames: ['method', 'route', 'statusCode'],

});

register.registerMetric(http_request_counter);You'll notice I've defined three labels for the method, status code, and route (as per my requirements). Labels are dimensions that we can add to our metrics to help with our PromQL queries later.

Next we need to increment this counter whenever a request is made to our app. It will depend on the package you are using to handle HTTP requests but, in my app, there is a code block that is called every time a request is made (regardless of the route). Here is the block, along with the line of code which increments the counter:

router.use(function(req, res, next)

{

// Increment the HTTP request counter

http_request_counter.labels({method: req.method, route: req.originalUrl, statusCode: res.statusCode}).inc();

next();

}The inc() function is what increments the counter. The rest of the line is setting the value of each label dynamically based on the request that was made to the app.

When I run my app now and navigate to /metrics in a browser I see something like:

# HELP myapp_http_request_count Count of HTTP requests made to my app

# TYPE myapp_http_request_count counter

myapp_http_request_count{method="GET",route="/homepage",statusCode="200",app="myapp"} 5

myapp_http_request_count{method="GET",route="/metrics",statusCode="200",app="myapp"} 3The data above tells me that, since starting the app, I have requested /homepage 5 times and /metrics 3 times.

If you've followed along with me so far, well done! You've just instrumented your code with a custom metric.

Defining a custom histogram

What if I wanted to track how long each HTTP request is taking? In other words, the response time of each route. This is another one of the four golden signals (latency).

To do this we need a different Prometheus data type, a counter isn't going to cut it. A histogram is a Prometheus data type and is ideal for this purpose, but just to warn you, it's a little more complicated than a counter.

The histogram data type keeps track of how many recorded values occur within a set of boundaries (called "buckets"). It also keeps track of the count of measurements and the sum of total values. Prometheus can then utilize these numbers to estimate percentiles and provide averages later.

I want to keep track of how long it takes to call my APIs using a histogram. I know from experience that my services take only a few milliseconds on average, so I want to define buckets at 1ms, 2ms, 3ms, 4ms, 5ms, 10ms, 25ms, 50ms, 100ms, 250ms, and 1s.

To define my custom histogram metric with the above buckets and to register the metric in the metric registry, I use the following code:

const http_request_duration_milliseconds = new Prometheus.Histogram({

name: 'myapp_http_request_duration_milliseconds',

help: 'Duration of HTTP requests in milliseconds.',

labelNames: ['method', 'route', 'code'],

buckets: [1,2,3,4,5,10,25,50,100,250,500,1000],

})

register.registerMetric(http_request_duration_milliseconds);There's no magic to automatically figure out how long our requests are taking, instead we are going to add a timer and measure it ourselves.

The first thing we want to do is start a timer whenever a request is first received. Where you put this will depend on how you've implemented your service, but for me it was the same code block I referenced above:

router.use(function(req, res, next)

{

// Start a timer for every request made

res.locals.startEpoch = Date.now();

next();

}It stores the current time (as a 13-digit epoch timestamp) in a variable called startEpoch which is attached to our response object.

Now we need to capture the time again after the logic of our route is complete, take the difference, and report the result. In my code I couldn't find a way to write the code once and have it applied to all routes. Instead, I had to add the following code at the end of every route that I wanted to time:

const responseTimeInMilliseconds = Date.now() - res.locals.startEpoch;

http_request_duration_milliseconds

.labels(req.method, req.route.path, res.statusCode)

.observe(responseTimeInMilliseconds)The first line takes a snapshot of the current time and takes away the time that the request started. The result is the processing time in milliseconds.

The second line reports our timing to prom-client.

What does the histogram data look like?

I ran my app with the changes above and requested the /metrics route four times in a row:

- The first request took 1 millisecond

- The second request took 3 milliseconds

- The third request took 3 milliseconds

- The fourth request took 2 milliseconds

If I look at the /metrics endpoint, this is what I see:

# HELP myapp_http_request_duration_milliseconds Duration of HTTP requests in milliseconds.

# TYPE myapp_http_request_duration_milliseconds histogram

myapp_http_request_duration_milliseconds_bucket{le="1",method="GET",route="/metrics",code="200",app="datapool-manager"} 1

myapp_http_request_duration_milliseconds_bucket{le="2",method="GET",route="/metrics",code="200",app="datapool-manager"} 1

myapp_http_request_duration_milliseconds_bucket{le="3",method="GET",route="/metrics",code="200",app="datapool-manager"} 3

myapp_http_request_duration_milliseconds_bucket{le="4",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="5",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="10",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="25",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="50",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="100",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="250",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="500",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="1000",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_bucket{le="+Inf",method="GET",route="/metrics",code="200",app="datapool-manager"} 4

myapp_http_request_duration_milliseconds_sum{method="GET",route="/metrics",code="200",app="datapool-manager"} 11

myapp_http_request_duration_milliseconds_count{method="GET",route="/metrics",code="200",app="datapool-manager"} 4In the last two lines we can see the count of requests is 4 and the sum of the values is 11 (1+4+3+3). Then the other lines tell us the count which sat within each boundary/bucket (which is shown by the label "le"). For example:

- 4 requests took 50 milliseconds or less

- 3 requests took 3 milliseconds or less

- 1 request took 2 milliseconds or less

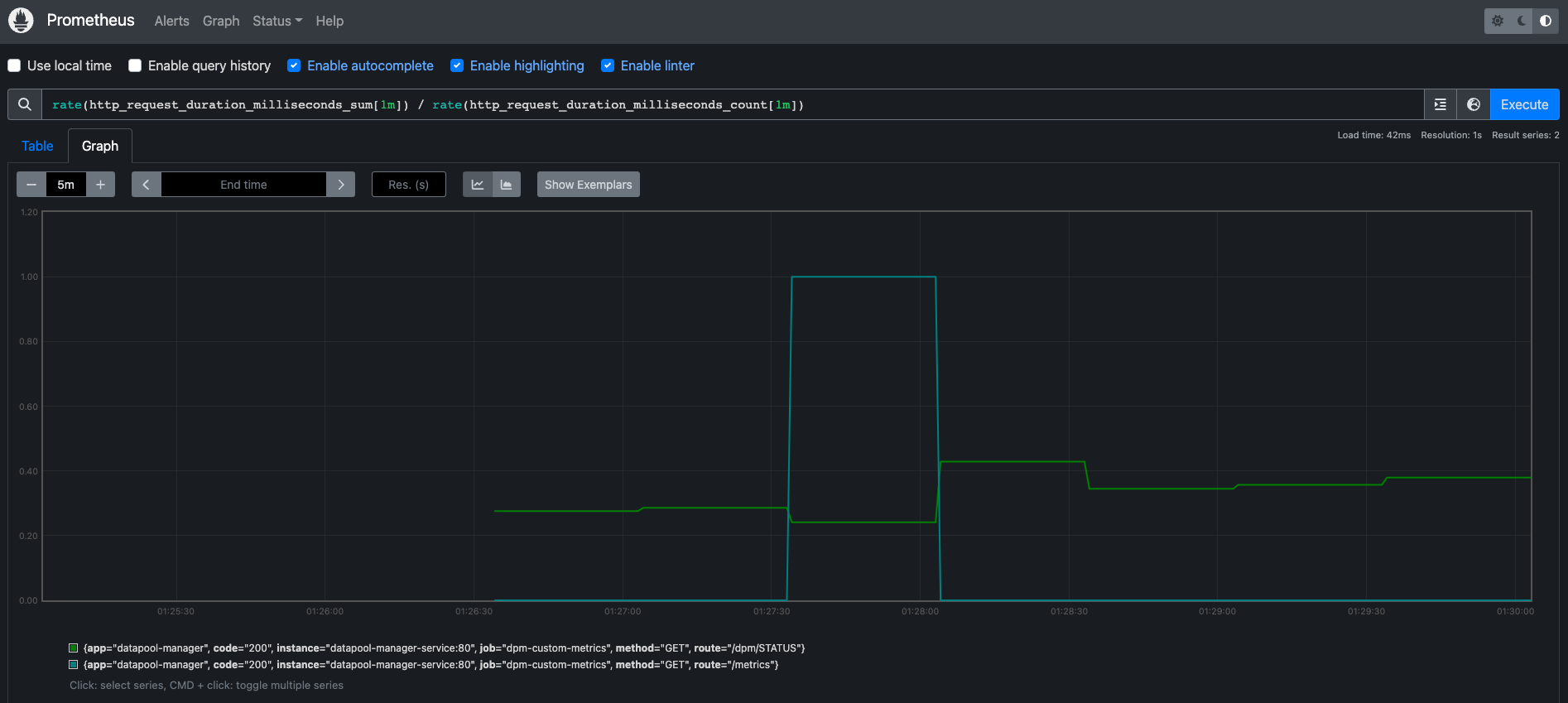

Congratulations, you've now instrumented your second custom metric! Once you start ingesting this in Prometheus you could use a PromQL query such as:

rate(myapp_http_request_duration_milliseconds_sum[1m]) / rate(myapp_http_request_duration_milliseconds_count[1m])...to show the average response time for each one minute interval:

You could also use:

histogram_quantile(0.9, rate(myapp_http_request_duration_milliseconds_bucket[1m]))...to show the (approximate) 90th percentile response time of each route. In my case, my requests were generally taking 1-2 milliseconds which meant they all fell in the same bucket. This was giving me inaccurate results. I will need to adjust my buckets, potentially to include fractions of milliseconds.

A few words on percentiles...

Prometheus histograms are not recording the raw data, and any percentiles calculated from them are only an approximation. Keep this in mind when interpreting the results.

Also remember that it doesn't make sense to take a percentile when there are less than a hundred samples. To have meaningful numbers you ideally want a large sample size (at least in the thousands). I generated some load using a simple test script built in Apache JMeter in order to test out my instrumentation.

Summary

Taking the step beyond using what comes out of the box with your monitoring tools and going in and instrumenting code is a big one. You need to understand the code you are instrumenting, as well as the libraries you are leveraging for your instrumentation. You also need a clear understanding of statistics and what the numbers you are collecting mean (and do not mean).

This is a very specific example using one flavour of one language. I'm hoping to experiment with instrumentation of other languages and using other tools (beyond Prometheus) in the future. However, I hope even this specific example gives you an idea that instrumenting code is achievable and the Prometheus SDK is not difficult to get started with.

Related plugins

Prometheus

Monitor metrics from Prometheus using custom PromQL queries.

SquaredUp has 60+ pre-built plugins for instant access to data.